1. The Problem: Why Benchmarking Weather Models is Broken

"All models are wrong, but some are useful." ~ George Box

Weather forecasting has always been a game of accuracy, but how do we know which model performs best? Today, most weather models are evaluated against gridded datasets, such as analysis and reanalysis products (e.g., ECMWF ERA5, NOAA CFSR). While these grids provide a structured, global perspective, they introduce systematic biases that make benchmarking problematic.

The Challenge of Grid-Based Benchmarking

- Reanalysis products assimilate observations into a model, so they are model output rather than "true" ground truth.

- Interpolation smooths out local weather extremes, missing key variations.

- Gridded products inherit the physical assumptions and the resolution of the model that produced them.

- They may not represent the actual weather conditions experienced at specific locations.

Additionally, specific reanalysis products, such as ERA5, have their own unique issues, including discontinuities caused by their 12-hour assimilation windows (running from 09:00 to 21:00 UTC and 21:00 to 09:00 UTC). These windows can introduce systematic errors in variables like near-surface temperature and winds, further complicating accurate benchmarking.

For many applications, such as power forecasting for renewable energy, agriculture, and transportation, what truly matters is how accurate a forecast is at a specific location, not how it scores against an abstracted grid. A wind farm, for example, depends on the real weather at its site, not on a reanalysis value representing a grid cell roughly 25 km across.
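To make the smoothing effect concrete, here is a minimal sketch using synthetic numbers (not real observations). It assumes a hypothetical 25 km transect sampled every kilometer, with a narrow local gust in the middle, and compares what a station at the gust would record with what a single cell-wide average would report.

```python
import numpy as np

# Synthetic wind-speed transect (m/s), one value per km over 25 km:
# a calm 5 m/s background plus a narrow gust centered at km 12.
x_km = np.arange(25)
wind = 5.0 + 10.0 * np.exp(-((x_km - 12) ** 2) / (2 * 1.5 ** 2))

station_value = wind[12]       # what a station located at km 12 would record
grid_cell_value = wind.mean()  # what one 25 km cell average would report

print(f"station: {station_value:.1f} m/s, grid cell: {grid_cell_value:.1f} m/s")
```

The cell average sits far below the station peak, so a forecast tuned to match the grid value could look accurate on a gridded benchmark while missing the extreme that the site actually experienced.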

2. Enter StationBench: A New Approach to Weather Forecast Evaluation

StationBench changes the game by offering station-level benchmarking—a more realistic and granular way to assess model accuracy. Instead of comparing against reanalysis datasets, it evaluates forecasts directly against real-world weather station observations.
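One common way to compare a gridded forecast with a point observation is to take the forecast value at the grid point nearest to the station. The sketch below illustrates that idea with NumPy on a regular latitude/longitude grid. It is an assumption for illustration only: the function name `nearest_grid_value`, the synthetic field, and the observed value are hypothetical, and StationBench's own matching method may differ (for example, it could interpolate instead).

```python
import numpy as np

def nearest_grid_value(field, grid_lats, grid_lons, station_lat, station_lon):
    """Return the value of a 2D (lat, lon) field at the grid point nearest a station."""
    i = int(np.abs(grid_lats - station_lat).argmin())
    # Wrap longitude differences into [-180, 180) so grids crossing the
    # antimeridian are handled correctly.
    dlon = (grid_lons - station_lon + 180.0) % 360.0 - 180.0
    j = int(np.abs(dlon).argmin())
    return field[i, j]

# Hypothetical 0.25-degree grid and a synthetic 2 m temperature field (K).
grid_lats = np.linspace(51.0, 53.0, 9)
grid_lons = np.linspace(4.0, 6.0, 9)
rng = np.random.default_rng(0)
field = 283.0 + rng.normal(0.0, 2.0, size=(9, 9))

# Hypothetical station location and observation.
station_lat, station_lon = 52.10, 5.18
observed = 284.2

forecast_at_station = nearest_grid_value(
    field, grid_lats, grid_lons, station_lat, station_lon
)
error = forecast_at_station - observed
print(f"forecast error at station: {error:+.2f} K")
```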

🔑 Key Advantages of Station-Level Benchmarking

✅ True Ground Truth – Uses actual meteorological station data instead of smoothed interpolations.

✅ Captures Local Weather Extremes – Reflects real conditions, including microclimates.

✅ Better for Renewable Energy Forecasting – Essential for solar and wind power predictions.

✅ Fairer Model Comparisons – Accounts for local biases and station-specific errors.

3. How StationBench Works

StationBench is a Python-based library that makes it easy to evaluate and compare weather models against station-level truth data.

📌 Key Features

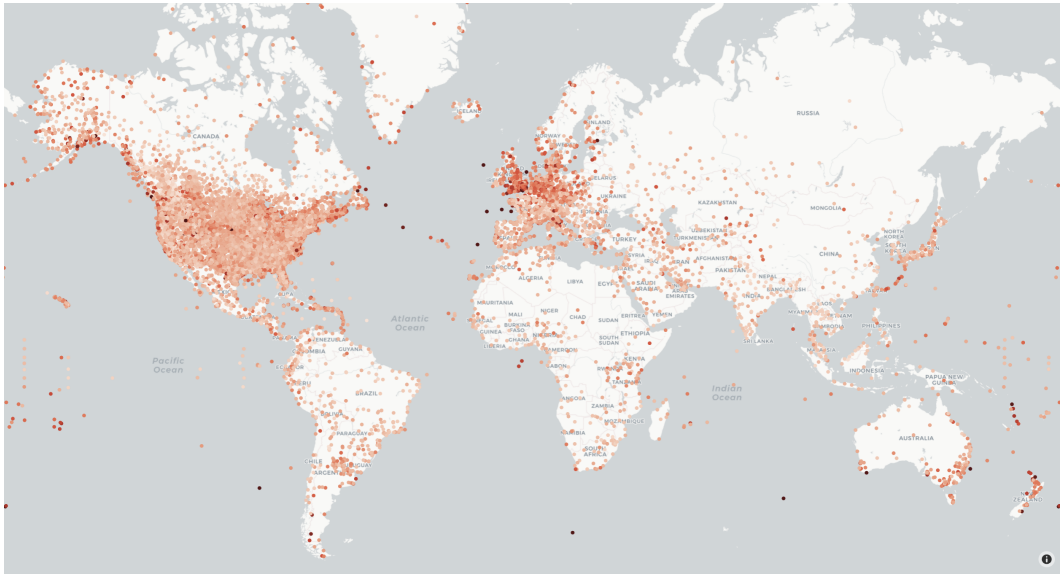

- Pre-processed Ground Truth Data – Curated observations from 10,000+ stations worldwide, sourced from the Meteostat dataset.

- Comprehensive Forecast Metrics – RMSE, mean bias error (MBE), and skill scores.

- Multi-Region Support – Evaluate accuracy across different geographies.

- Integration with Major Forecast Models – Works with ECMWF, NOAA, and other providers.

- Easy Python & CLI Interface – Simple to use, whether you're a researcher or practitioner.
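For readers less familiar with the metrics named above, the following sketch shows their standard textbook definitions in NumPy. These are illustrative definitions, not StationBench's internal code. In particular, the skill score here is assumed to be RMSE-based relative to a reference forecast, and the library's exact formulation may differ. All sample values are made up.

```python
import numpy as np

def rmse(forecast, observed):
    """Root mean square error: penalizes large misses more heavily."""
    return float(np.sqrt(np.mean((forecast - observed) ** 2)))

def mean_bias_error(forecast, observed):
    """Mean bias error: positive means the forecast runs too high on average."""
    return float(np.mean(forecast - observed))

def skill_score(forecast, reference, observed):
    """RMSE-based skill: 1 is perfect, 0 matches the reference, negative is worse."""
    return 1.0 - rmse(forecast, observed) / rmse(reference, observed)

# Made-up wind speeds (m/s) at one station for four time steps.
observed = np.array([4.0, 6.0, 9.0, 7.0])
forecast = np.array([5.0, 6.0, 8.0, 9.0])
reference = np.array([6.0, 8.0, 6.0, 10.0])  # e.g. a baseline model

print(f"RMSE:  {rmse(forecast, observed):.3f}")
print(f"MBE:   {mean_bias_error(forecast, observed):.3f}")
print(f"Skill: {skill_score(forecast, reference, observed):.3f}")
```

RMSE and bias answer different questions: a forecast can have zero bias and still a large RMSE if its errors cancel out on average, which is why reporting both is useful.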

🛠️ Quickstart Example

```python
import stationbench

# Run a forecast evaluation
stationbench.calculate_metrics(
    forecast="path/to/forecast_data.zarr",
    start_date="2023-01-01",
    end_date="2023-12-31",
    output="metrics_output.zarr",
    region="europe",
    name_10m_wind_speed="10si",
    name_2m_temperature="2t",
)
```

4. Why This Matters for Renewable Energy

Many industries depend on hyper-local weather accuracy:

- 🌞 Solar Power – Solar radiation forecasts must match actual station data.

- 💨 Wind Energy – Wind speed is site-specific and critical.

For these energy industries, forecasting well against real weather is more valuable than performing well on an interpolated dataset.
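A short calculation shows why wind speed accuracy is so critical. The power available in the wind scales with the cube of wind speed (power density = 0.5 × air density × speed³), so small speed errors become large power errors. The sketch below uses that idealized relation with a standard sea-level air density. Real turbines follow a power curve with cut-in, rated, and cut-out speeds, so treat this as an illustration of sensitivity rather than a production power model.

```python
def wind_power_density(wind_speed_ms, air_density=1.225):
    """Idealized power per unit swept area in W/m^2: 0.5 * rho * v^3."""
    return 0.5 * air_density * wind_speed_ms ** 3

actual = wind_power_density(8.0)    # true wind speed at the site
forecast = wind_power_density(8.8)  # forecast that is 10% too high

relative_error = (forecast - actual) / actual
print(f"10% wind speed error -> {relative_error:.1%} power error")
```

A 10% overestimate in wind speed translates into roughly a 33% overestimate in available power, which is the kind of error a smoothed grid value can easily introduce at a specific site.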

5. Join the StationBench Community & Start Benchmarking Today!

Ready to benchmark smarter and improve your forecasts? Get started with StationBench today!

📌 GitHub: StationBench Repository

📌 Installation: pip install stationbench

📌 Contribute & Discuss: Feel free to open issues or pull requests on GitHub.